Closing the Loop: Building High-Trust AI Feedback Systems

Learn how to implement automated feedback loops and verification layers to build resilient, high-trust AI agent fleets in your organization.

As organizations scale their AI agent fleets, the primary bottleneck is no longer capability; it is trust. When autonomous systems interact with customers, handle data, or execute code, every additional agent and action adds another way to fail, and without robust feedback loops those failures can go unnoticed until they compound.

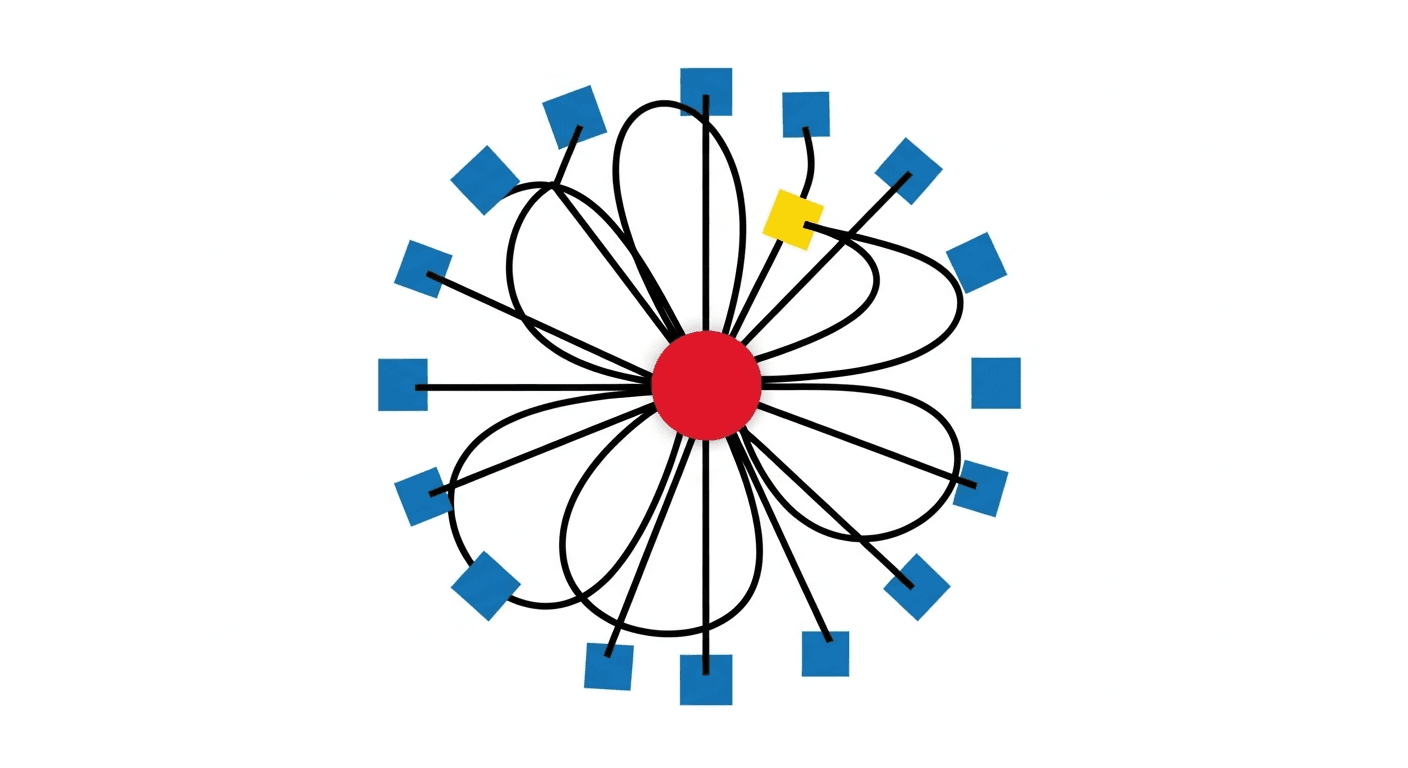

The Anatomy of an AI Feedback Loop

A high-trust AI system requires more than just a successful execution. It needs a multi-stage verification process that mirrors human oversight but at machine speed.

- Intent Verification: Ensuring the agent understands the goal before it acts.

- Execution Auditing: Recording every step taken, including tool calls and internal reasoning.

- Outcome Validation: Measuring the result against predefined success metrics.

- Continuous Learning: Feeding failures and successes back into the system to refine future prompts and logic.
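The four stages can be sketched as a single wrapper around an agent call. This is a minimal, hypothetical illustration rather than a production design: `run_with_feedback`, `AuditLog`, and `FeedbackStore` are names invented for this example. The intent check is a caller-supplied predicate standing in for whatever confirmation step you actually use, such as a human approval or a second model. The "learning" stage only records outcomes so that prompts and logic can be reviewed later.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AuditLog:
    """Execution auditing: an append-only record of each step, including tool calls."""
    entries: list[tuple[str, str]] = field(default_factory=list)

    def record(self, step: str, detail: str) -> None:
        self.entries.append((step, detail))


@dataclass
class FeedbackStore:
    """Continuous learning: outcomes kept for later review of prompts and logic."""
    successes: list[str] = field(default_factory=list)
    failures: list[str] = field(default_factory=list)


def run_with_feedback(
    goal: str,
    confirm_intent: Callable[[str], bool],
    act: Callable[[str, AuditLog], str],
    meets_success_metric: Callable[[str], bool],
    log: AuditLog,
    store: FeedbackStore,
) -> bool:
    # 1. Intent verification: stop before acting if the goal is not confirmed.
    if not confirm_intent(goal):
        log.record("intent", "not confirmed; action skipped")
        store.failures.append(goal)
        return False
    log.record("intent", "confirmed")

    # 2. Execution auditing: the agent writes its own tool calls into the shared log.
    result = act(goal, log)
    log.record("result", result)

    # 3. Outcome validation against a predefined success metric.
    ok = meets_success_metric(result)
    log.record("validation", "pass" if ok else "fail")

    # 4. Continuous learning: file the goal under its outcome for later refinement.
    (store.successes if ok else store.failures).append(goal)
    return ok


# Usage with a stub agent (hypothetical tool name and output).
def summarize_agent(goal: str, log: AuditLog) -> str:
    log.record("tool_call", "fetch_report()")
    return "summary: Q3 revenue up"


log, store = AuditLog(), FeedbackStore()
ok = run_with_feedback(
    "summarize Q3 report",
    confirm_intent=lambda g: "summarize" in g,
    act=summarize_agent,
    meets_success_metric=lambda r: r.startswith("summary:"),
    log=log,
    store=store,
)
```

Passing the audit log into the agent, rather than reconstructing steps afterwards, keeps tool calls and their ordering in one record that the validation and learning stages can both read.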

Why Agent Hierarchy Matters for AI Trust

Building a fleet requires understanding how different agents interact. For instance, our Mira Agent Hierarchy provides the foundational structure for these interactions. Without a clear hierarchy, feedback loops can become circular and inefficient.
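One way to catch circular feedback is to model the hierarchy as a map from each agent to the supervisor it reports to, then reject any configuration in which following those links leads back to an agent already visited. The sketch below assumes each agent has at most one supervisor. It does not reflect how the Mira Agent Hierarchy is actually represented, and `find_feedback_cycle` and the agent names are hypothetical.

```python
from typing import Optional


def find_feedback_cycle(supervisor_of: dict[str, Optional[str]]) -> Optional[list[str]]:
    """Return the first cycle of reporting links found, or None if there are no cycles.

    supervisor_of maps each agent to the agent it reports feedback to
    (None for a top-level agent). Supervisors missing from the map are
    treated as top-level.
    """
    for start in supervisor_of:
        path: list[str] = []
        seen: set[str] = set()
        node: Optional[str] = start
        # Walk up the reporting chain until we reach a top-level agent or loop.
        while node is not None:
            if node in seen:
                # The cycle is the part of the path from node's first visit onward.
                return path[path.index(node):]
            seen.add(node)
            path.append(node)
            node = supervisor_of.get(node)
    return None


healthy = {
    "orchestrator": None,
    "billing-agent": "orchestrator",
    "support-agent": "orchestrator",
    "refund-agent": "support-agent",
}
broken = {
    "orchestrator": None,
    "qa-agent": "review-agent",
    "review-agent": "qa-agent",
}
```

Running this check whenever the fleet configuration changes turns "circular and inefficient" loops into a configuration error that can be rejected before deployment. The walk is quadratic in the worst case, which is acceptable for fleets of modest size.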

Implementing the Loop in 2026

Modern AI orchestration platforms now support "shadow execution," in which a second agent audits the first in real time. This verification layer is critical in high-stakes environments such as finance and healthcare.
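A simplified version of this pattern places the auditor as a synchronous gate: the primary agent proposes an action, the auditor reviews it, and nothing is committed unless it approves. In this sketch the auditor is a deterministic policy check standing in for a second agent, and `ProposedAction`, `shadow_audit`, `execute_with_shadow`, the tool names, and the spending limit are all hypothetical. A real shadow agent might run concurrently and evaluate the primary agent's reasoning as well as its final action.

```python
from dataclasses import dataclass
from functools import partial
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    tool: str
    amount: float  # e.g. a payment amount in a finance workflow (hypothetical)


@dataclass(frozen=True)
class Verdict:
    approved: bool
    reason: str


def shadow_audit(action: ProposedAction, allowed_tools: set[str], max_amount: float) -> Verdict:
    """Rule-based stand-in for the auditing agent: checks the action against policy."""
    if action.tool not in allowed_tools:
        return Verdict(False, f"tool {action.tool!r} not permitted")
    if action.amount > max_amount:
        return Verdict(False, f"amount {action.amount} exceeds limit {max_amount}")
    return Verdict(True, "within policy")


def execute_with_shadow(
    propose: Callable[[], ProposedAction],
    audit: Callable[[ProposedAction], Verdict],
    commit: Callable[[ProposedAction], None],
) -> Verdict:
    action = propose()
    verdict = audit(action)
    if verdict.approved:
        commit(action)  # side effects happen only after the auditor approves
    return verdict


committed: list[ProposedAction] = []
policy = partial(shadow_audit, allowed_tools={"transfer_funds"}, max_amount=10_000.0)

v1 = execute_with_shadow(lambda: ProposedAction("transfer_funds", 2_500.0), policy, committed.append)
v2 = execute_with_shadow(lambda: ProposedAction("transfer_funds", 50_000.0), policy, committed.append)
v3 = execute_with_shadow(lambda: ProposedAction("delete_records", 0.0), policy, committed.append)
```

Gating before commit means a rejected action never produces side effects, at the cost of adding the auditor's latency to every action. Returning the `Verdict` either way gives the feedback loop a reason string to log and learn from.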

As we discussed in our guide on scaling trust in AI systems, continuous verification helps keep your fleet aligned with corporate policy and security standards.

Conclusion

Closing the feedback loop is the difference between a collection of experimental scripts and a production-grade AI workforce. Start by identifying your most critical failure points and building verification layers around them today.