Building AI Agent Fleets: A Practical Strategy for Executive Automation

How tech leaders can move beyond experimental chatbots to building resilient, autonomous agent fleets that drive measurable business outcomes.

Let's get right to the point. Most executive teams are still treating AI as a search replacement or a sophisticated drafting tool. They are missing the asymmetric advantage of proactive automation. The real value isn't in a chatbot that answers questions; it's in a fleet of agents that execute work without being asked.

Transitioning from individual AI experiments to a production-grade fleet requires a shift in mindset. You are no longer managing tools; you are architecting a workforce. In my work building the Mira system, I've found that success comes down to three non-negotiable pillars: specialization, verification, and feedback loops.

Specialization Over Generalization

A common mistake is trying to build a "god-agent" that handles everything. This fails because general-purpose prompts are brittle: an agent asked to cover every task is more prone to hallucination and struggles with edge cases.

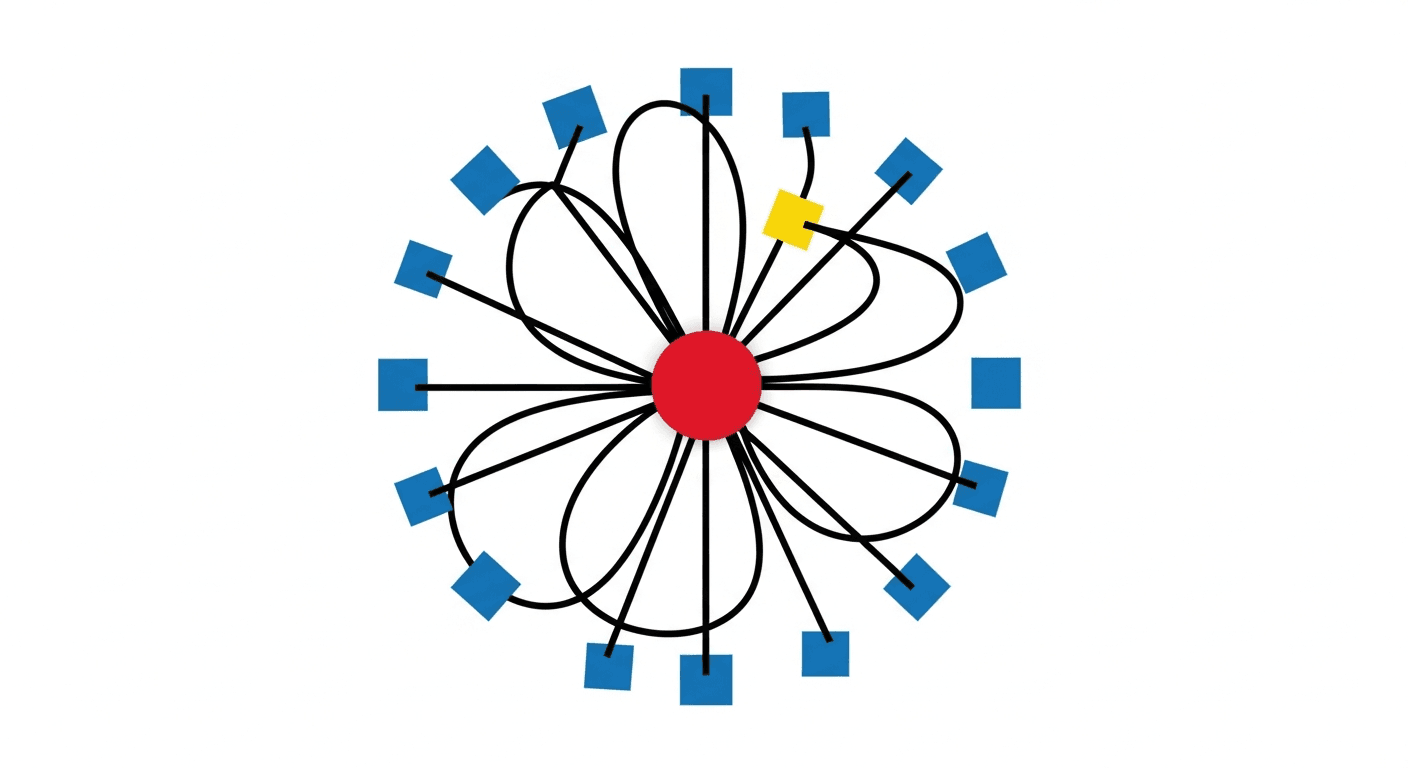

The correct posture is to build a hierarchy of specialized agents. Each agent should have a narrow scope, a specific set of tools, and a clear definition of success. For example, in our content infrastructure, we don't have one "marketing agent." We have agents for research, agents for drafting, and agents for technical verification. This hierarchy lets each agent work at the full extent of its capability without straying outside the scope where it can be trusted.
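To make the idea concrete, here is a minimal Python sketch of a specialized hierarchy. It is an illustration under stated assumptions, not how Mira is built: the class names `SpecializedAgent` and `Coordinator` are hypothetical, and the lambdas stand in for real model calls. Each agent owns exactly one task type, declares its tools, and carries its own success check; the coordinator refuses work that no specialist owns instead of falling back to a generalist.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SpecializedAgent:
    """A narrowly scoped agent: one task type, a declared tool set, and a success check."""
    name: str
    task_type: str                    # the single kind of work this agent owns
    tools: frozenset[str]             # the only tools this agent is meant to use
    run: Callable[[str], str]         # stand-in for the model call (hypothetical)
    succeeded: Callable[[str], bool]  # the agent's explicit definition of success

    def handle(self, payload: str) -> str:
        result = self.run(payload)
        if not self.succeeded(result):
            raise ValueError(f"{self.name} failed its success check")
        return result


@dataclass
class Coordinator:
    """Routes each task to the one agent that owns its type; there is no catch-all agent."""
    agents: dict[str, SpecializedAgent] = field(default_factory=dict)

    def register(self, agent: SpecializedAgent) -> None:
        if agent.task_type in self.agents:
            raise ValueError(f"task type {agent.task_type!r} is already owned")
        self.agents[agent.task_type] = agent

    def dispatch(self, task_type: str, payload: str) -> str:
        agent = self.agents.get(task_type)
        if agent is None:
            raise LookupError(f"no specialist for {task_type!r}")
        return agent.handle(payload)


# Hypothetical research and drafting specialists, chained by the coordinator.
coordinator = Coordinator()
coordinator.register(SpecializedAgent(
    name="researcher", task_type="research", tools=frozenset({"web_search"}),
    run=lambda topic: f"notes on {topic}", succeeded=lambda out: out.startswith("notes"),
))
coordinator.register(SpecializedAgent(
    name="drafter", task_type="draft", tools=frozenset({"doc_editor"}),
    run=lambda notes: f"draft based on {notes}", succeeded=lambda out: len(out) > 0,
))
print(coordinator.dispatch("draft", coordinator.dispatch("research", "agent fleets")))
# prints "draft based on notes on agent fleets"
```

Raising an error for an unowned task type is a deliberate choice: it surfaces a gap in the fleet rather than quietly handing the work to an agent that was never scoped for it.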

The Verification Layer: Trust but Verify

Autonomous work is only useful if it's correct. As I've said before, "trust but verify" is not a defensive posture. It is the only way to scale.

Every action taken by an autonomous agent must be subject to a verification layer. This isn't just about checking the final output; it's about auditing the internal reasoning and tool calls. If an agent executes a shell command or writes to a database, that action should be logged, analyzed, and—in high-stakes environments—approved by a human or a more senior "supervisor" agent. This is the core of continuous verification.
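A verification layer can start as a simple gate that every tool call passes through. The Python sketch below is one possible shape, not a reference design: `ToolCall`, `VerificationGate`, and the `HIGH_STAKES_TOOLS` set are hypothetical, and which tools count as high stakes is a policy assumption. Every call is logged with the agent's stated reasoning, calls with no rationale are rejected, and shell or database writes need sign-off from an approver, which could be a human prompt or a supervisor agent.

```python
import json
import logging
from dataclasses import dataclass
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("verification")

# Assumption: the set of high-stakes tools is a policy choice, shown here for illustration.
HIGH_STAKES_TOOLS = {"shell", "db_write"}


@dataclass
class ToolCall:
    agent: str
    tool: str
    args: dict[str, str]
    reasoning: str  # the agent's stated rationale, kept for the audit trail


class VerificationGate:
    def __init__(self, approver: Callable[[ToolCall], bool]):
        self.approver = approver            # a human prompt or a supervisor agent
        self.audit_log: list[dict] = []

    def check(self, call: ToolCall) -> bool:
        high_stakes = call.tool in HIGH_STAKES_TOOLS
        if not call.reasoning.strip():
            approved = False                # no stated rationale: reject outright
        elif high_stakes:
            approved = self.approver(call)  # explicit sign-off for risky actions
        else:
            approved = True                 # low-stakes: auto-approved but still logged
        entry = {"agent": call.agent, "tool": call.tool, "args": call.args,
                 "reasoning": call.reasoning, "high_stakes": high_stakes,
                 "approved": approved}
        self.audit_log.append(entry)
        log.info(json.dumps(entry))
        return approved


def supervisor(call: ToolCall) -> bool:
    # Hypothetical supervisor policy: never allow recursive force-deletes.
    return not (call.tool == "shell" and "rm -rf" in call.args.get("cmd", ""))


gate = VerificationGate(approver=supervisor)
print(gate.check(ToolCall("drafter", "doc_editor", {"doc": "q3-post"}, "save the draft")))   # True
print(gate.check(ToolCall("ops", "shell", {"cmd": "rm -rf /tmp/cache"}, "free disk space")))  # False
```

Logging the rejected calls alongside the approved ones matters: the audit trail is what lets you inspect an agent's reasoning after the fact, not just its final output.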

Closing the Loop

A fleet that doesn't learn from its mistakes is a liability. You need automated feedback loops that feed execution data back into the system. When an agent fails, the failure shouldn't just be fixed; the underlying prompt, tool definition, or logic must be updated to prevent a recurrence.
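One lightweight way to close the loop is to turn each diagnosed failure into a standing rule in the agent's prompt, and to flag failures that keep recurring for a deeper fix. The sketch below assumes prompts are plain strings keyed by agent name; `FeedbackLoop`, `record_failure`, and `recurring` are hypothetical names. It covers only the prompt-update path; changes to tool definitions or logic would still be made by whoever reviews the recurring failures.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class FeedbackLoop:
    prompts: dict[str, str]  # agent name -> current system prompt
    failures: Counter = field(default_factory=Counter)
    lessons: dict[str, list[str]] = field(default_factory=dict)

    def record_failure(self, agent: str, cause: str, lesson: str) -> None:
        """Count the failure and fold its lesson into the agent's prompt (once)."""
        self.failures[(agent, cause)] += 1
        learned = self.lessons.setdefault(agent, [])
        if lesson not in learned:  # avoid stacking duplicate rules
            learned.append(lesson)
            self.prompts[agent] += f"\nRule: {lesson}"

    def recurring(self, threshold: int = 2) -> list[tuple[str, str]]:
        """(agent, cause) pairs that keep failing: candidates for a tool or logic change."""
        return [key for key, count in self.failures.items() if count >= threshold]


loop = FeedbackLoop(prompts={"drafter": "You write blog drafts."})
for _ in range(2):
    loop.record_failure("drafter", "missing_citation", "Cite a source for every statistic.")
print(loop.prompts["drafter"])  # the rule is appended once, not twice
print(loop.recurring())         # [('drafter', 'missing_citation')]
```

A failure that recurs even after its lesson is in the prompt is a signal that the prompt was not the real problem, which is why the sketch separates "learn a rule" from "escalate for a structural fix."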

The practical result: routine work gets done without anyone having to ask for it, and the system becomes more resilient with every execution. This is how you move from "playing with AI" to "operating an AI-enabled business."

Getting Started

Start small. Identify one routine, high-volume process—like PR review, content distribution, or customer inquiry triage—and build a two-agent loop: one to execute and one to verify.
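Here is what a two-agent loop might look like for customer inquiry triage, as a minimal Python sketch. The executor and verifier are deterministic stand-ins for model calls, and `run_two_agent_loop`, `Outcome`, and the label set are hypothetical. The executor proposes a label, the verifier either accepts it or returns an objection, the objection is fed back into the next attempt, and after a fixed number of rejections the task is escalated to a human instead of looping forever.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Executor = Callable[[str, Optional[str]], str]  # (task, last objection) -> output
Verifier = Callable[[str, str], Optional[str]]  # (task, output) -> None if OK, else objection


@dataclass
class Outcome:
    status: str               # "accepted" or "escalate_to_human"
    output: str               # the executor's last output
    attempts: int
    feedback: Optional[str]   # the verifier's last objection, if any


def run_two_agent_loop(task: str, execute: Executor, verify: Verifier,
                       max_attempts: int = 3) -> Outcome:
    feedback: Optional[str] = None
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = execute(task, feedback)
        feedback = verify(task, output)
        if feedback is None:
            return Outcome("accepted", output, attempt, None)
    return Outcome("escalate_to_human", output, max_attempts, feedback)


LABELS = {"billing", "technical", "other"}  # hypothetical triage categories


def triage_executor(inquiry: str, feedback: Optional[str]) -> str:
    # Stand-in for a model call; a real executor would add `feedback` to its prompt.
    if feedback is None:
        return "other"  # naive first guess
    return "billing" if "invoice" in inquiry.lower() else "technical"


def triage_verifier(inquiry: str, label: str) -> Optional[str]:
    if label not in LABELS:
        return f"unknown label {label!r}"
    if "invoice" in inquiry.lower() and label != "billing":
        return "inquiry mentions an invoice; expected 'billing'"
    return None


result = run_two_agent_loop("My invoice total looks wrong", triage_executor, triage_verifier)
print(result.status, result.output, result.attempts)  # accepted billing 2
```

The cap on attempts is the important design choice: the verifier can only say "no," so without a limit and an escalation path a disagreement between the two agents would never resolve.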

The question isn't whether you can afford to invest in this infrastructure. It's whether you can afford to remain reactive while your competitors are building proactive systems.

Jascha Kaykas-Wolff is the CEO of Visiting Media and author of "Growing Up Fast." He builds AI systems that work.